5 quick questions on IEC 82304

{fastsocialshare}

Christian Kaestner at Qadvis, is an expert company offering quality and regulatory services for Medtech companies, and co-author of the upcoming standard "IEC 82304-1: Health Software - General Requirements for product safety." We were fortunate to get a few minutes of his time to answer five quick questions about the standard and its implications.

What is IEC 82304 all about?

"The purpose of the standard is to establish product requirements for standalone software, i.e. software products not running on dedicated hardware. IEC 82304-1 could be seen as an equivalent to IEC 60601-1 with the difference that the latter includes dedicated hardware for the software to run on. (And of course have a lot of hardware requirements!)"

But isn't this already covered by IEC 62304?

"No, IEC 62304 is a process standard and as such “only” define expectations on activities and their corresponding outputs. The key scope of IEC62304 is the lower part of the traditional V-model while IEC 82304-1 takes the complete product lifecycle into account. This means that IEC 82304-1 establish product requirements which aren’t addressed by IEC 62304, examples:

- Product requirements, like intended use and accompanying documents.

- Product validation

- Decommissioning of software (and its data!)

IEC 62304 is of course referenced by IEC 82304-1 for the development of the actual software."

Which companies are affected by IEC 82304? How can I find out if my company is affected?

"The scope of the standard is health software which is a broader term than medical device software. So, I would suggest any company working with medical device software (standalone) or software in the surrounding of medical device products to have a look at the standard. As a rule of thumb; you could say if your company develops software that might have an impact on the health/wellbeing of a human, I would say you are affected.

Below some examples which I believe should be included in the scope of health software:

- Prescription management systems - If the data is mixed up in any way it may result in wrong doses or even wrong medication to a patient.

- App keeping track of your medication (as one example) - If you use the app to avoid forgetting your medication or by mistake take it twice. This would of course not be good follow the instructions and the instructions are wrong.

- Software tracking “safe periods” to avoid pregnancy - Assuming the software counts wrong and gives green light when it shouldn’t… I can imagine the surprise in a month or so isn’t very popular!"

What is your advice to companies that need to implement IEC 82304?

"To be fair; all standards are voluntary so there is no requirement or need to comply with IEC 82304-1. But as for all other standards, it is a good praxis and gives your company a quality mark otherwise hard to claim.

My advice to companies with regards to IEC 82304-1 is to take advantage of it; it will support you in what is required to make standalone software to a product."

When will the standard be released and where can I get a copy?

"So far, the standard isn’t published, it is currently out for review (DIS, draft international standard). Depending on the result of the voting, an FDIS may be ready in Q2 2016. Usually, the difference between an FDIS and the final version should be marginal. The final version can probably be expected in late Q3 2016. (But, it all depends on the results from the voting process.)

Should you be interested already today, you can purchase a copy of the draft standard at www.iso.org."

If you want to know more about how to prepare for IEC 82304-1, contact Christian Kaestner at

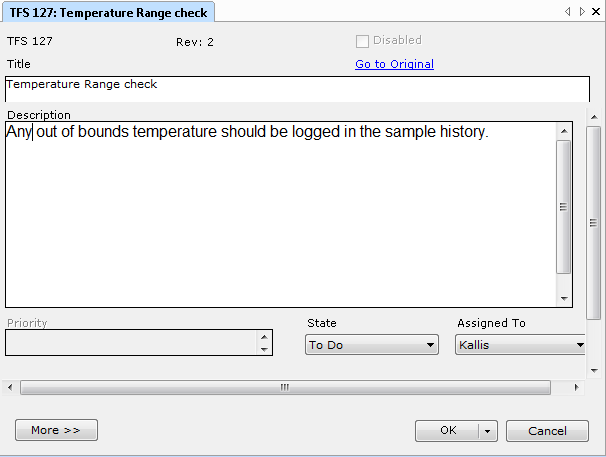

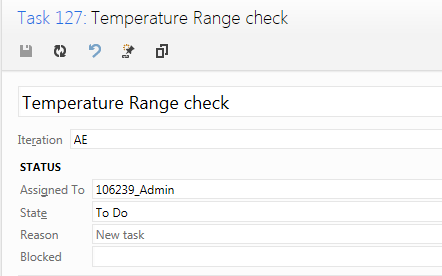

Learn more about how Aligned Elements can help you support IEC 62304

Request a live demo and let us show you how Aligned Elements can help you to comply to IEC 62304